March 23, 2026

Artificial Intelligence is increasingly influencing how electronic systems sense, process and respond across industrial, medical, automotive and consumer applications. From cloud infrastructure to compact, low-power edge devices, AI is becoming an integral part of modern electronic system design. One relevant development for embedded system design is the shift of selected AI workloads from centralized cloud infrastructure to local edge platforms. This supports intelligent processing closer to the data source, with the potential to reduce response times, lower network dependency and improve privacy.

Embedded AI and Edge AI are closely connected concepts, but they emphasize different parts of intelligent system design. Embedded AI refers to AI functionality integrated into embedded hardware platforms, enabling local data processing, inference and decision support in devices such as microcontrollers, processors, SoCs, FPGAs, smart sensors, cameras, gateways and intelligent modules.

Edge AI refers to the broader deployment of artificial intelligence close to where data is generated, across devices, machines, vehicles, medical equipment, industrial systems, gateways and IoT endpoints. Instead of sending all data to a centralized cloud platform, Edge AI supports local or near-local analysis, filtering and decision support. This can reduce latency, lower bandwidth requirements, improve privacy and support system autonomy in environments with limited or unreliable connectivity.

This shift from cloud-centric intelligence to intelligent endpoints is driven by practical engineering constraints such as latency, power consumption, bandwidth limitations, connectivity, privacy and data ownership. From an embedded electronics perspective, Edge AI is about deciding where intelligence should be placed in the data chain: directly in the sensor node, on an embedded processor, in an AI accelerator, on a system-on-module, in an edge gateway, in the cloud or in a hybrid architecture combining several of these layers.

In practice, Embedded AI is an enabling technology for Edge AI. Embedded AI provides the local processing capability inside the device, while Edge AI describes the broader system architecture in which intelligence is distributed across endpoints, gateways, cloud platforms and user interfaces. In many real-world applications, a hybrid architecture is the practical choice: time-critical or privacy-sensitive processing is handled locally, while cloud platforms support fleet management, long-term analytics, model updates and system optimization.

Once Embedded AI functionality is integrated into the device, the key design question for engineers and R&D teams is not only which AI model to use, but where inference should run in the system: inside the sensor node, on a microcontroller, on an embedded processor, in an AI accelerator, on a system-on-module, in an edge gateway or in the cloud.

This decision determines how data moves through the system and directly affects sensors, processing hardware, software frameworks, memory usage, latency, power consumption, connectivity, security and long-term reliability.

From a hardware perspective, AI in embedded electronics is not a single function block, but a complete processing pipeline. Sensor data must be captured, conditioned, preprocessed and converted into meaningful features before inference can take place.

The result of that inference then needs to trigger a decision-support output, alert, communication action, user feedback or physical actuation. Where the inference step is placed, in the sensor node, embedded processor, edge gateway or cloud, is one of the key architectural decisions in Edge AI system design.
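The pipeline stages described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the feature set and the fixed peak threshold standing in for a trained model are all hypothetical and do not come from any specific framework.

```python
from statistics import mean, pstdev

def condition(raw, offset=0.0, scale=1.0):
    """Signal conditioning: remove a sensor offset and apply a gain."""
    return [(x - offset) * scale for x in raw]

def extract_features(window):
    """Reduce a window of samples to a small feature dictionary."""
    return {
        "mean": mean(window),
        "std": pstdev(window),
        "peak": max(abs(x) for x in window),
    }

def infer(features, peak_limit=2.0):
    """Placeholder for a model: a fixed threshold on the peak value (assumption)."""
    return "anomaly" if features["peak"] > peak_limit else "normal"

def act(label):
    """Translate the inference result into an output action."""
    return "raise_alert" if label == "anomaly" else "no_action"

def run_pipeline(raw):
    """Capture -> condition -> features -> inference -> action."""
    return act(infer(extract_features(condition(raw))))

print(run_pipeline([0.1, -0.2, 0.05, 0.1]))
print(run_pipeline([0.1, 5.0, -0.2, 0.0]))
```

Each stage is a separate function, so the inference step can in principle be moved to another tier (for example a gateway) without changing the capture and conditioning code.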

Local AI processing is particularly relevant for systems that require real-time response, low power consumption or reliable operation in environments with limited connectivity. By processing data closer to the source, embedded AI can reduce network dependency, improve system-level efficiency and support faster local response or decision-support workflows at the edge.

When AI inference moves closer to the device, the design challenge shifts from “which model do we use?” to “how do we make the complete system work reliably within real-world constraints?”

Important trade-offs include:

Processing performance

Does the application need simple anomaly detection, audio keyword spotting, image classification, object detection, sensor fusion or transformer-based inference?

Power consumption

Can the device run from mains power, or does it need to operate from a small battery for months or years?

Memory and storage

Can the model, runtime, buffers and sensor data fit within available SRAM, DRAM, flash or embedded storage?

Latency

Does the system need to react in milliseconds, or is delayed analysis acceptable?

Thermal behavior

Can the enclosure dissipate heat, especially in fanless, sealed or industrial environments?

Connectivity

What data must stay local, and what data can be sent to a gateway or cloud platform?

Security

How are firmware, AI models, data and updates protected over the full product lifecycle?

Lifecycle and validation

Can the selected hardware, software stack and AI model be supported, tested and maintained for the expected lifetime of the product?

These trade-offs become especially important when AI functionality is implemented in constrained embedded devices.
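As a rough illustration of the memory and storage question, the sketch below estimates whether a model fits a given device. It assumes, for simplicity, that weights and runtime code live in flash and that activation buffers live in SRAM; the parameter count, buffer sizes and device capacities are hypothetical example values, not figures for any real part.

```python
KIB = 1024

def weight_bytes(num_params, bits_per_weight):
    """Storage needed for the model weights alone."""
    return num_params * bits_per_weight // 8

def fits_device(num_params, bits_per_weight, runtime_bytes,
                activation_bytes, flash_bytes, sram_bytes):
    """Check flash (weights + runtime) and SRAM (activation buffers) budgets."""
    flash_needed = weight_bytes(num_params, bits_per_weight) + runtime_bytes
    sram_needed = activation_bytes
    return flash_needed <= flash_bytes and sram_needed <= sram_bytes

# Hypothetical device: 512 KiB flash, 128 KiB SRAM.
device = dict(flash_bytes=512 * KIB, sram_bytes=128 * KIB)
# Hypothetical model: 250,000 parameters, 100 KiB runtime, 64 KiB activations.
model = dict(num_params=250_000, runtime_bytes=100 * KIB, activation_bytes=64 * KIB)

print(fits_device(bits_per_weight=32, **model, **device))  # 32-bit float weights
print(fits_device(bits_per_weight=8, **model, **device))   # 8-bit integer weights
```

With these example numbers the 32-bit variant exceeds the flash budget while the 8-bit variant fits, which is why the memory trade-off is usually evaluated together with model optimization.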

Depending on the required functionality and target market, embedded AI devices often operate under tight constraints on power, compute capacity and memory. In consumer electronics, many smartphones, laptops and edge devices now include dedicated AI acceleration through NPUs, GPUs or specialized SoC architectures.

At the same time, embedded AI is increasingly being deployed in smaller, power-constrained devices such as wearables, smart home products and IoT endpoints.

These systems are typically designed to handle tasks such as image recognition, speech recognition, natural language processing, health monitoring and sensor-data analytics for decision support, automated response or user feedback.

The evolution of Embedded AI and Edge AI is supported by several converging technology trends: more efficient processors, optimized AI models, improved sensor integration, mature development frameworks and increasingly capable connectivity solutions.

Embedded AI implementations can range from rule-based systems with basic pattern recognition to neural-network-based systems that use deep learning algorithms for more complex inference tasks.

The growth of the Internet of Things has also been an important catalyst for embedded AI. As connected devices generate increasing volumes of data, local processing and decision support become more relevant. Embedded AI addresses this challenge by supporting local data analysis and event-based response, while reducing bandwidth requirements and limiting unnecessary data transfer to the cloud.

Optimized AI models are essential for reducing power consumption and supporting AI functionality in portable, battery-operated devices. Local processing can also improve scalability in distributed systems by reducing dependence on centralized processing infrastructure.

Hardware building blocks

Hardware building blocks include microcontrollers, microprocessors, digital signal processors, FPGAs, GPUs, NPUs and dedicated AI accelerators. Component selection is typically driven by workload requirements, latency targets, power budget, memory capacity, thermal constraints and system cost.

Edge AI Architecture levels

Edge AI systems can be implemented across different performance and power levels, from ultra-low-power endpoints to embedded processors, AI accelerators, system-on-modules, single-board computers and edge gateways. The appropriate architecture depends on where data is generated, how fast the system must respond, how much power is available, how much data can be transmitted and whether the system must continue operating without reliable cloud connectivity.

At the lowest power level, AI can run on microcontrollers that combine traditional MCU functionality with DSP extensions, vector processing or neural-network acceleration. These devices can support always-on functions such as keyword spotting, gesture recognition, anomaly detection, vibration monitoring, sensor fusion and biometric signal analysis. By processing data locally and waking the rest of the system only when a relevant event is detected, these architectures can help reduce average power consumption.
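The effect of waking the system only on relevant events can be approximated with a simple duty-cycle calculation. The sketch below is a first-order estimate that ignores battery self-discharge, wake-up transients and temperature effects; the current and capacity figures are hypothetical.

```python
def average_current_ma(active_ma, sleep_ma, duty_cycle):
    """Time-weighted average current for a duty-cycled device."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return active_ma * duty_cycle + sleep_ma * (1.0 - duty_cycle)

def battery_life_hours(capacity_mah, avg_ma):
    """Idealized battery life: capacity divided by average current."""
    return capacity_mah / avg_ma

# Hypothetical node: 10 mA while active, 0.01 mA asleep, active 1% of the time.
avg = average_current_ma(active_ma=10.0, sleep_ma=0.01, duty_cycle=0.01)
hours = battery_life_hours(capacity_mah=220.0, avg_ma=avg)
print(f"average current: {avg:.4f} mA, estimated life: {hours / 24:.0f} days")
```

Because the active term dominates the average even at a 1% duty cycle in this example, reducing how often the main processor wakes up tends to matter more than shaving the sleep current further.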

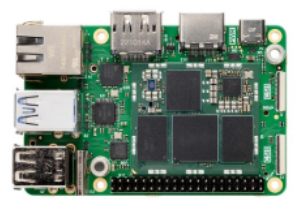

At higher performance levels, embedded processors, AI accelerators, SBCs and SOMs can support more demanding workloads such as real-time video analytics, multi-sensor perception, HMI intelligence, robotics, smart cameras and industrial gateways. These platforms typically combine CPU, GPU, NPU or dedicated AI acceleration with memory, high-speed I/O, connectivity and software support. This makes them practical building blocks for product teams that want to add Edge AI capabilities without designing the full high-density processing platform from scratch.

An effective Edge AI architecture requires co-optimization of hardware, firmware, AI models and software toolchains. Memory hierarchy, SRAM size, memory bandwidth, data movement, peak inference power, thermal behavior and deterministic latency can be just as important as raw processor performance. Treating AI as an afterthought can lead to inefficient designs, while early hardware/software co-design can support improved performance, lower power consumption and more reliable real-world operation.

Software

The software stack typically includes firmware, an operating system or real-time operating system, device drivers, middleware, AI runtimes and pre-trained models optimized for deployment on the target hardware. This stack should be optimized for deterministic performance, efficient memory use and reliable interaction with sensors, communication interfaces and hardware accelerators.

AI Models and Frameworks

AI frameworks optimized for edge deployment have improved the ability to run inference workloads on resource-constrained embedded platforms.

Typical optimization techniques include quantization (such as using 8-bit integers instead of 32-bit floats), pruning (removing unnecessary neurons or weights) and knowledge distillation, where smaller models are trained to approximate the behavior of larger models.
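To make the quantization step concrete, the following sketch maps floating-point weights to 8-bit integers with a single symmetric scale factor. It is a simplified illustration: production toolchains typically add per-channel scales, zero points and calibration data, and the function names here are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in q]

weights = [0.5, -1.0, 0.25, 0.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, worst_error)
```

Each weight now occupies one byte instead of four, at the cost of a rounding error of at most half a quantization step per weight.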

These frameworks also help developers utilize available hardware acceleration, including GPUs, NPUs, DSPs and dedicated AI accelerators, through optimized runtime kernels and hardware-specific execution paths. Some frameworks also support limited on-device training or model adaptation, which can enable personalization while reducing the need to transfer sensitive data to the cloud.

For many embedded AI systems, sensor data is the primary input for local analysis and decision support. Typical input data may include temperature, motion, video, audio, heart rate and blood oxygen levels. Sensor selection is critical because the quality, timing and reliability of input data directly affect AI model performance. Relevant sensor categories include:

Vision sensors

Visible-light and thermal cameras support computer vision tasks such as object detection, classification, people counting and anomaly detection.

Perception sensors

Time-of-Flight (ToF) sensors and stereo cameras enable depth perception for robotics, autonomous systems, access control and spatial awareness applications.

Audio sensors

Microphones and audio-processing ICs support speech recognition, sound classification, keyword detection and acoustic environment monitoring. Microphone arrays are increasingly used for sound localization and audio-source separation using techniques such as beamforming.

Motion and position sensors

Motion and position sensors, including IMUs (Inertial Measurement Units), accelerometers, gyroscopes and magnetic sensors, support movement tracking, gesture recognition, robotics and drone stabilization.

Rotational encoders provide precise position and speed feedback in robotics, motor-control and automation applications.

Environmental sensors

Temperature, humidity and pressure sensors are used in smart buildings, agricultural monitoring, HVAC systems and environmental control applications.

Air quality sensors

Air-quality sensors, including CO2 and particulate-matter sensors, support environmental health monitoring, building automation and industrial safety applications.

Touch and force sensors

Tactile sensors enable robotic systems to detect pressure, grip force, texture and physical interaction.

Force-torque sensors allow robotic arms to measure applied forces during gripping, assembly or human-machine interaction.

Biometric sensors

Fingerprint scanners, ECG sensors and SpO₂ sensors can support AI-enabled monitoring, healthcare and authentication systems, depending on the application and validation requirements.

The interaction between sensors, processing hardware, software and AI models can support closed-loop systems in which data is captured, analyzed and translated into alerts, control actions or user feedback.

Embedded AI and Edge AI applications differ by market, but many share similar design requirements: reliable sensor input, deterministic latency, efficient inference execution, secure data handling and robust operation over the full product lifecycle.

Healthcare

Embedded AI and Edge AI can support local monitoring, anomaly detection and clinical decision-support workflows, depending on validation level, workflow integration and applicable regulatory requirements. Key design challenges include low power consumption, sensor accuracy, data privacy, medical-grade reliability, cybersecurity and regulatory compliance.

Application areas: wearables, medical imaging, telemedicine and fall detection.

Automotive

Embedded AI and Edge AI can support real-time perception, driver assistance, in-cabin monitoring and safety-related functions in modern vehicles, provided that system design, validation, cybersecurity and functional-safety requirements are addressed.

Key design challenges include deterministic latency, functional safety, sensor fusion, cybersecurity, thermal management, long-term reliability and alignment with applicable automotive standards.

Application areas: autonomous vehicles, ADAS and in-vehicle monitoring.

Industrial IoT (IIoT)

Embedded AI and Edge AI can support real-time monitoring, predictive maintenance, quality inspection and adaptive process control in industrial environments. Key design challenges include robust operation in harsh conditions, reliable sensor input, deterministic response times, secure connectivity, long product lifecycles and integration with existing industrial protocols.

Application areas: smart manufacturing and Industry 4.0; autonomous robots; energy management.

Consumer electronics

Embedded AI and Edge AI can support more responsive, context-aware and power-efficient user experiences in smart devices and connected products. Key design challenges include low power consumption, compact hardware design, privacy protection, cost control, fast response times and integration with cloud and mobile ecosystems.

Application areas: smartphones and smart home devices; smart appliances.

Agriculture

Embedded AI and Edge AI can support data-driven farming, livestock monitoring and precision agriculture by enabling local analysis of environmental, crop and animal-health data. Key design challenges include low-power operation, outdoor reliability, wireless connectivity, sensor accuracy, long battery life and robust performance in variable environmental conditions.

Application areas: livestock monitoring and precision farming.

Embedded AI and Edge AI have evolved from rule-based embedded processing into intelligent edge systems capable of real-time sensing, inference and local response. By combining efficient hardware, optimized software frameworks and AI models designed for edge deployment, embedded AI is expanding what can be achieved within constrained edge devices.

As hardware acceleration, model optimization and edge software ecosystems continue to mature, Embedded AI and Edge AI are becoming more relevant in the design of intelligent, connected products.

However, embedded AI also introduces several engineering challenges that need to be addressed early in the design process:

Resource constraints

Limited memory, processing capacity, thermal budget and battery capacity require efficient AI models and carefully selected hardware platforms. Design and validation engineers need to balance model accuracy, latency, power consumption and hardware limitations.

Model optimization

Reducing model size while maintaining application-level accuracy is a complex task. Techniques such as quantization, pruning and knowledge distillation need to be applied with attention to latency, power consumption, memory use and validation requirements.

Security risks

Embedded devices can be vulnerable to physical and cyber-attacks. Secure boot, encryption, authentication, access control and secure firmware-update mechanisms are important for protecting long-term system and data integrity. This is especially relevant in safety-related applications such as automotive, healthcare and industrial automation.

Interoperability

Ensuring reliable communication between diverse devices, protocols and ecosystems remains an important integration challenge.

Development complexity

Developing AI functionality for embedded systems requires specialized knowledge and tools. Engineering teams need to understand AI model development, embedded software, hardware acceleration, data pipelines, validation and cybersecurity. This creates a need for multidisciplinary teams that can connect software, hardware and system-level design.

Cost

Higher-performance embedded AI hardware can increase system cost. Cost control remains important, particularly for high-volume IoT, consumer and industrial endpoint applications.

While many embedded AI developments are already being deployed in practical edge devices, several emerging research areas may influence future system architectures. These topics are not all directly applicable to current embedded designs, but they provide insight into where AI technology may evolve.

TinyML

TinyML focuses on running machine learning models on highly resource-constrained devices such as microcontrollers and ultra-low-power sensors. This can support always-on intelligence in battery-powered and compact products, where power consumption, memory size and processing capacity are critical design constraints.

Edge Transformer Models

Transformer-based models are increasingly being optimized for edge deployment. While originally associated with large-scale AI systems, compact transformer architectures are being explored for vision, audio, language and sensor-processing applications where contextual understanding is required.

Event-Based Vision

Event-based vision uses sensors that detect changes in a scene rather than capturing full image frames at fixed intervals. This can reduce data volume, latency and power consumption, making it relevant for robotics, industrial automation, motion detection and high-speed vision applications.
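The idea of reporting changes rather than full frames can be emulated in software for illustration. Note the assumption: real event sensors operate asynchronously per pixel, typically on logarithmic intensity, whereas this sketch simply compares two conventional frames and uses a hypothetical fixed threshold.

```python
def events_from_frames(prev, curr, threshold):
    """Return (row, col, polarity) for pixels whose brightness changed enough.

    polarity is +1 for a brightness increase and -1 for a decrease.
    """
    events = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (before, after) in enumerate(zip(prev_row, curr_row)):
            diff = after - before
            if abs(diff) >= threshold:
                events.append((r, c, 1 if diff > 0 else -1))
    return events

prev_frame = [[10, 10], [10, 10]]
curr_frame = [[10, 30], [0, 10]]
print(events_from_frames(prev_frame, curr_frame, threshold=5))
```

Only the two changed pixels produce output, which illustrates why a mostly static scene generates very little data in an event-based system.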

Sensor Fusion

Sensor fusion combines data from multiple sensor types, such as cameras, radar, ToF sensors, IMUs, microphones and environmental sensors. By combining different data sources, embedded AI systems can support more robust perception and more reliable system responses in complex real-world environments.
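One of the simplest forms of sensor fusion is an inverse-variance weighted average of two measurements of the same quantity. The sketch below assumes independent, unbiased sensors with known noise variances; the readings and variances are hypothetical values chosen for illustration.

```python
def fuse(x1, var1, x2, var2):
    """Combine two estimates, weighting each by the inverse of its variance."""
    w1 = 1.0 / var1
    w2 = 1.0 / var2
    fused = (w1 * x1 + w2 * x2) / (w1 + w2)
    fused_var = 1.0 / (w1 + w2)
    return fused, fused_var

# Hypothetical distance readings in metres: a noisier ToF sensor and a radar.
distance, variance = fuse(x1=2.0, var1=0.04, x2=2.2, var2=0.01)
print(distance, variance)
```

The fused estimate lies closer to the more precise sensor, and its variance is lower than that of either input, which is the basic motivation for combining sensor types.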

AI Model Compression

AI model compression techniques, including quantization, pruning and knowledge distillation, are important for deploying AI models on embedded hardware. These methods reduce model size and computational requirements while aiming to preserve application-level accuracy and real-time performance.
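As an illustration of pruning, the sketch below zeroes the smallest-magnitude weights of a flat weight list. This is unstructured magnitude pruning in its simplest form; practical workflows usually prune per layer and fine-tune afterwards, and the function name is hypothetical.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned

print(prune_by_magnitude([0.9, -0.05, 0.3, 0.01], sparsity=0.5))
```

Zeroed weights only reduce storage or compute when the runtime or hardware can exploit sparsity, so the benefit depends on the target platform.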

Secure Edge AI

As more intelligence moves to the edge, security becomes increasingly important. Secure Edge AI focuses on protecting models, data and device integrity through secure boot, encryption, authentication, trusted execution environments and secure firmware updates.
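The integrity check behind a secure firmware or model update can be sketched with Python's standard `hmac` and `hashlib` modules. This is a deliberate simplification: it uses a shared secret key, whereas production devices commonly verify asymmetric signatures with keys held in secure hardware. The key and image bytes are placeholder values.

```python
import hashlib
import hmac

def sign_image(image: bytes, key: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over a firmware or model image."""
    return hmac.new(key, image, hashlib.sha256).digest()

def verify_image(image: bytes, tag: bytes, key: bytes) -> bool:
    """Accept the image only if the tag matches (constant-time comparison)."""
    expected = hmac.new(key, image, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)

key = b"example-shared-secret"           # placeholder, not a real key
image = b"\x00\x01firmware-or-model-bytes"  # placeholder payload
tag = sign_image(image, key)

print(verify_image(image, tag, key))                # untouched image
print(verify_image(image + b"tampered", tag, key))  # modified image
```

The device would apply the update only when verification succeeds, rejecting images that were altered in transit or in storage.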

Federated Learning

Federated learning allows multiple devices or systems to collaboratively improve AI models while keeping data decentralized. This can be relevant for privacy-sensitive applications in healthcare, industrial systems, smart buildings and connected consumer devices.
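The aggregation step at the heart of many federated learning schemes is a weighted average of locally trained parameters, in the style of federated averaging. The sketch below assumes each client sends a flat list of weights plus its local sample count; raw data never leaves the clients. Names and numbers are hypothetical.

```python
def federated_average(client_weights, client_sizes):
    """Average client parameter vectors, weighted by local dataset size."""
    if len(client_weights) != len(client_sizes):
        raise ValueError("one size is required per client")
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * size for w, size in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]

# Two hypothetical devices: one trained on 1 sample, the other on 3.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_weights)
```

Only parameter vectors and sample counts are exchanged here; real deployments often add secure aggregation or differential privacy on top, since model updates themselves can leak information.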

Neuromorphic Computing

Neuromorphic computing is inspired by the structure and function of the human brain. It aims to process information in a highly parallel and energy-efficient way, making it a long-term research direction for low-power AI and event-driven sensing applications.

On-Device Generative AI Inference

On-device generative AI inference is an emerging area where compact AI models are deployed locally to generate or interpret text, images, audio or multimodal data. While still challenging for embedded systems, advances in AI accelerators, memory optimization and model compression are making selected generative AI workloads more relevant at the edge.

Quantum AI *

Quantum AI combines concepts from quantum computing and artificial intelligence. In theory, quantum computing could accelerate certain AI-related tasks, such as optimization, simulation or complex data processing. However, Quantum AI remains largely experimental and is not yet directly applicable to most embedded AI systems, so it is best viewed as a longer-term research direction rather than a practical design consideration for current embedded products.

* Quantum AI remains a longer-term research topic and is not yet a practical design consideration for most embedded AI products.

For engineers and R&D teams working on near-term embedded AI applications, topics such as TinyML, sensor fusion, model compression, secure Edge AI and on-device inference are more directly relevant than longer-term research areas such as neuromorphic computing or quantum AI.

Whether deployed in embedded systems or server-based platforms, AI creates opportunities while introducing complex technical, ethical and societal challenges.

AI development continues to evolve, and engineering teams need scalable, reliable and application-specific solutions that can be validated in real-world operating conditions. The TOP-electronics team supports customers and partners by connecting engineering teams with relevant technologies, supplier expertise and design-in support for embedded AI applications.

The following section complements the TOP Tech Talk with selected technologies available through the TOP-electronics portfolio. These solutions support embedded AI system development, from edge processing and embedded computing to storage, sensing, audio and connectivity.

Through technology suppliers including EdgeCortix, Geniatech, Grinn, Ambiq, Quectel, Silicon Motion, Cirrus Logic and Ingenic, TOP-electronics supports embedded AI designs with solutions for edge processing, embedded computing, connectivity, storage, audio processing and intelligent sensing.

With a focus on energy efficiency, compact design and technical support, TOP-electronics helps customers integrate AI capabilities into IoT and edge devices where local processing, connectivity, power consumption and lifecycle support are key design factors.
